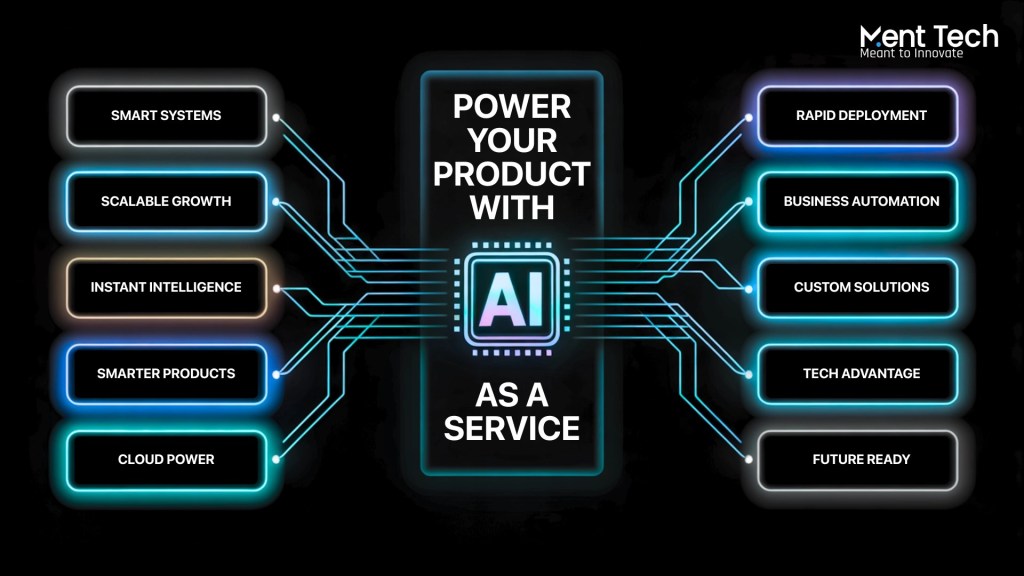

In the evolving world of artificial intelligence, the intersection of large language models, real-time interactions, and creative machine output has ushered in a transformative era. Businesses, developers, and product teams are now exploring advanced implementations of Prompt Engineering & Optimization, Adaptive AI Development, LLM Development, Conversational AI and Chatbot Development, and Generative AI Integration Services to build next-generation digital experiences.

This article explores the core aspects of these five foundational pillars, how they contribute to intelligent system design, and how companies can benefit from harnessing their combined potential in practical use cases.

Understanding the Role of Prompt Engineering & Optimization

At the heart of any interaction with a generative AI model lies the Prompt Engineering & Optimization process. This technique involves crafting precise instructions, queries, or inputs that guide large language models to produce accurate, meaningful, and contextually relevant responses.

Prompt engineering now goes far beyond simple question formulation. It involves layered structures, system instructions, chaining mechanisms, and prompt testing frameworks to fine-tune results. Optimized prompts reduce token waste, improve relevance, and make model output more reliable, which is critical in use cases like legal document review, customer support automation, code generation, and research summarization.
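The layering and chaining ideas above can be illustrated with a minimal Python sketch. It assumes a chat-style message format (a list of `role`/`content` dictionaries), and every name in it (`build_messages`, `run_chain`, `echo_model`) is hypothetical. The `model` argument stands in for whichever LLM client a team actually uses.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]

def build_messages(system: str, user_template: str, **fields: str) -> List[Message]:
    """Layer a fixed system instruction over a templated user prompt."""
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": user_template.format(**fields)},
    ]

def run_chain(model: Callable[[List[Message]], str], document: str) -> str:
    """Two-step chain: summarize first, then query the summary."""
    system = "You are a concise legal assistant."
    summary = model(build_messages(
        system,
        "Summarize the following document in three sentences:\n{document}",
        document=document,
    ))
    # The output of step one becomes the input of step two.
    return model(build_messages(
        system,
        "List the obligations mentioned in this summary:\n{summary}",
        summary=summary,
    ))

def echo_model(messages: List[Message]) -> str:
    """Hypothetical stand-in for a real LLM call: returns the last line of the user prompt."""
    return messages[-1]["content"].splitlines()[-1]
```

Keeping the model behind a plain callable means the same chain can be exercised with a stub in prompt tests and with a real client in production.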

For example, companies building intelligent search engines or workflow automation tools rely heavily on optimized prompting to ensure user requests are interpreted correctly. Moreover, multi-modal models now require prompts that combine image, text, and audio instructions, making prompt design a multidisciplinary task.

Adaptive AI Development for Real-Time Decision-Making

Traditional AI systems are often rule-based or trained on static datasets, which can limit their responsiveness to real-world change. That’s where Adaptive AI Development comes in.

This approach focuses on building systems that can dynamically respond to new data, user feedback, and environmental shifts. Adaptive AI learns continuously, updating its behavior based on both structured and unstructured data inputs. These models support continuous retraining and fine-tuning, allowing them to remain relevant even as patterns evolve.

In sectors like finance, healthcare, logistics, and retail, adaptive AI is essential for anomaly detection, personalized recommendations, fraud prevention, and demand forecasting. For instance, an adaptive fraud-detection system can pick up emerging patterns in transaction data and adjust its detection logic as new data arrives, rather than waiting for a scheduled manual retraining cycle.
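As a rough illustration of continuous updating, the sketch below flags unusual transaction amounts against a running mean and standard deviation, refreshed on every observation using Welford's online algorithm. It is a deliberately simple stand-in for a production fraud model. The class name, the threshold of three standard deviations, and the warm-up length are all assumptions made for the example.

```python
import math

class OnlineAnomalyDetector:
    """Flags values far from a running mean, updating statistics on every observation."""

    def __init__(self, threshold: float = 3.0, warmup: int = 5) -> None:
        self.threshold = threshold  # number of standard deviations treated as anomalous
        self.warmup = warmup        # observations to collect before flagging anything
        self.count = 0
        self.mean = 0.0
        self.m2 = 0.0               # running sum of squared deviations (Welford)

    def observe(self, value: float) -> bool:
        """Return True if value looks anomalous, then fold it into the statistics."""
        anomalous = False
        if self.count >= self.warmup:
            std = math.sqrt(self.m2 / (self.count - 1))
            anomalous = std > 0 and abs(value - self.mean) > self.threshold * std
        # Incremental update: no stored history and no separate retraining step.
        self.count += 1
        delta = value - self.mean
        self.mean += delta / self.count
        self.m2 += delta * (value - self.mean)
        return anomalous
```

One design choice to note: this sketch folds flagged values into the statistics too, so the baseline drifts with the data. A real system might hold flagged values out until a human or a second model confirms them.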

In software applications, adaptive AI powers features like smart email sorting, behavioral nudges in productivity tools, or AI-based quality checks in creative platforms.

LLM Development: Customizing Intelligence at Scale

The surge of large language models such as GPT, Claude, and Gemini has made LLM Development a critical area for AI-focused teams. While general-purpose models are powerful, businesses often require domain-specific intelligence, security compliance, and performance tuning, which makes LLM customization essential.

LLM Development involves selecting foundational models, training or fine-tuning them on proprietary datasets, implementing guardrails, and evaluating performance. This enables organizations to develop AI models that understand their unique workflows, terminology, and goals.

Healthcare firms may develop LLMs trained on clinical research papers to support medical reasoning. Legal firms can build models that specialize in contract analysis, while edtech platforms might fine-tune LLMs to generate adaptive learning paths for students.

Another advantage is cost-efficiency. Organizations can train smaller-scale LLMs optimized for specific tasks, reducing the inference cost and latency associated with general-purpose APIs.

A successful LLM Development strategy also incorporates human feedback loops, retrieval-augmented generation (RAG), and integration with databases or enterprise knowledge graphs.
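The retrieval step of RAG can be sketched in a few lines of Python. This example ranks documents by bag-of-words cosine similarity, which is an assumption chosen to keep the sketch dependency-free. Production systems typically use embedding models and a vector store instead. The function names and the prompt wording are hypothetical.

```python
import math
import re
from collections import Counter
from typing import List

def tokenize(text: str) -> Counter:
    """Lowercase word counts; a crude substitute for a real embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(query: str, documents: List[str], k: int = 2) -> List[str]:
    """Return the k documents most similar to the query."""
    q = tokenize(query)
    ranked = sorted(documents, key=lambda d: cosine(q, tokenize(d)), reverse=True)
    return ranked[:k]

def build_rag_prompt(query: str, documents: List[str], k: int = 2) -> str:
    """Place the retrieved passages in front of the question."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"
```

The resulting prompt string would then be sent to the chosen model. Grounding the answer in retrieved passages is what lets a general-purpose model respond from proprietary knowledge without being retrained on it.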

Conversational AI and Chatbot Development: Building Human-Like Interactions

Conversational AI and Chatbot Development remains one of the most adopted applications of AI across industries. These systems are designed to engage in natural, multi-turn conversations, helping users find information, solve problems, or complete tasks.

Earlier chatbot solutions relied on flow-based logic or simple NLP. Today’s conversational AI tools, built using LLMs and advanced NLU pipelines, offer contextual understanding, emotional intelligence, and multilingual capabilities. They can handle ambiguous queries, remember previous inputs, and personalize experiences.
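"Remembering previous inputs" usually comes down to resending recent turns with each request. The following Python sketch shows one simple way to do that with a bounded history. The `Conversation` class, its `max_turns` parameter, and the role names are assumptions for illustration rather than any particular vendor's API.

```python
from collections import deque
from typing import Deque, Dict, List

class Conversation:
    """Keeps the most recent turns so each request carries prior context."""

    def __init__(self, system: str, max_turns: int = 4) -> None:
        self.system = system
        # Each turn is one user message plus one assistant message.
        self.history: Deque[Dict[str, str]] = deque(maxlen=max_turns * 2)

    def add(self, role: str, content: str) -> None:
        """Record a message; the oldest one is dropped once the window is full."""
        self.history.append({"role": role, "content": content})

    def messages(self, user_input: str) -> List[Dict[str, str]]:
        """Build the payload for the next model call without mutating history."""
        return [
            {"role": "system", "content": self.system},
            *self.history,
            {"role": "user", "content": user_input},
        ]
```

A fixed window keeps token usage predictable. Longer-lived assistants often replace dropped turns with a running summary, which this sketch omits.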

In e-commerce, conversational agents assist in product selection, order tracking, and returns. In enterprise IT, they power helpdesk automation. Healthcare chatbots can offer symptom checks and appointment scheduling, while HR bots streamline onboarding and policy queries.

When building advanced conversational AI systems, developers need to ensure seamless integration with back-end APIs, CRMs, and support platforms. Voice interfaces, emotion detection, and visual UI elements like cards or carousels further enhance user engagement.

Training these bots also involves constant refinement, persona design, dialogue testing, and compliance checks—especially in sectors where accuracy and privacy are critical.

Generative AI Integration Services: From Vision to Deployment

To operationalize all the above technologies in real-world environments, organizations increasingly rely on Generative AI Integration Services. These services go beyond experimentation, helping businesses deploy generative AI at scale, integrated with their existing tools, processes, and infrastructure.

From embedding AI into web and mobile apps to automating document generation and creative workflows, generative AI is becoming a key productivity driver. Design firms use it to generate digital assets, marketing teams use it to write ad copy and product descriptions, and software teams use it to automate documentation.

Generative AI Integration Services often cover:

- API integration with platforms like OpenAI, Anthropic, Mistral, or Meta

- Deployment of models in private cloud or on-prem environments

- UI/UX design for AI-native products

- Model orchestration, caching, and latency optimization

- Enterprise-grade security and access controls

- Evaluation metrics for generated outputs

These services allow businesses to move fast while keeping control over quality, brand tone, and user experience. As regulation increases, integration partners also help ensure that ethical use, auditability, and transparency are built into every generative pipeline.
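Two of the items listed above, caching and model orchestration, can be sketched together in Python. The example assumes each provider is wrapped as a plain function from prompt to text. `CachedRouter` and the in-memory dictionary cache are hypothetical choices, and a real deployment would more likely use a shared cache with expiry.

```python
from typing import Callable, Dict, List

Provider = Callable[[str], str]

class CachedRouter:
    """Tries providers in order and caches the first successful answer per prompt."""

    def __init__(self, providers: List[Provider]) -> None:
        self.providers = providers
        self.cache: Dict[str, str] = {}

    def complete(self, prompt: str) -> str:
        # Serve repeated prompts from memory to save latency and cost.
        if prompt in self.cache:
            return self.cache[prompt]
        errors = []
        for provider in self.providers:
            try:
                result = provider(prompt)
            except Exception as exc:  # fall through to the next provider
                errors.append(exc)
                continue
            self.cache[prompt] = result
            return result
        raise RuntimeError(f"all {len(self.providers)} providers failed: {errors}")
```

Because providers are ordinary callables, swapping or reordering models becomes a configuration change rather than a code change.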

Bringing It All Together: Intelligent Ecosystems

Each of the five highlighted areas (Prompt Engineering & Optimization, Adaptive AI Development, LLM Development, Conversational AI and Chatbot Development, and Generative AI Integration Services) plays a distinct role in building intelligent, scalable, and responsive digital ecosystems.

An e-learning platform, for example, might use adaptive AI to personalize content, LLM development to generate explanations, conversational AI for student interactions, prompt optimization for backend logic, and integration services to embed all of these seamlessly into its app.

Similarly, a fintech startup could use these technologies to automate investor support, generate reports, perform document KYC checks, and answer compliance queries using AI agents trained on internal policies.

Organizations investing in this full stack of AI capabilities position themselves to unlock new efficiencies, improve customer satisfaction, and explore creative possibilities that were not feasible before.

Future Outlook and Considerations

As AI technologies mature, a few considerations will shape the roadmap for enterprises:

- Privacy and compliance: Secure data handling, consent management, and audit logs will be essential, especially with adaptive and generative systems.

- Multi-modal capabilities: Systems will move from text-only to combinations of text, image, audio, and video.

- Model interoperability: Tools that allow switching between or combining different LLMs will increase.

- Real-time inference: Speed and responsiveness will be differentiators in live applications.

- AI agents and autonomy: Advanced use cases will move beyond chatbots to autonomous agents that can act, schedule, purchase, or coordinate tasks.

Choosing the right mix of tools, models, and partners will determine long-term success in the AI era.

Final Thoughts

The journey to building intelligent applications is no longer just about training a model; it’s about architecting an adaptive, scalable, and human-centered ecosystem. With strategic investment in Prompt Engineering & Optimization, Adaptive AI Development, LLM Development, Conversational AI and Chatbot Development, and Generative AI Integration Services, organizations can unlock breakthrough capabilities across industries.

The real opportunity lies not just in adopting AI but in aligning it with business goals, user needs, and operational realities. And as these technologies continue to evolve, the businesses that embrace intelligent systems today will define the user experiences of tomorrow.